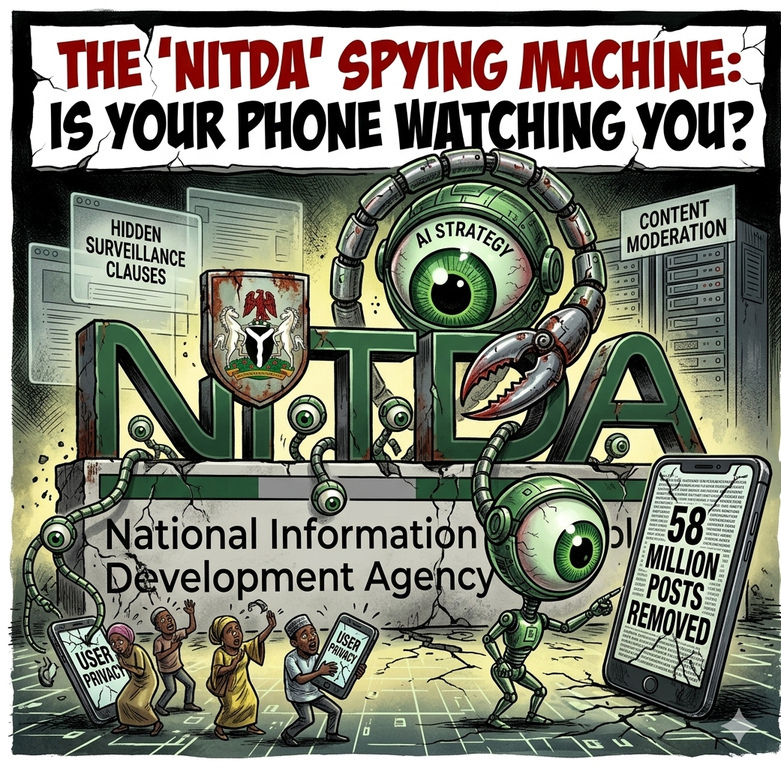

A new wave of concern is sweeping Nigeria’s digital space: Is the government quietly building a surveillance system through NITDA and its AI strategy?

At the center of the debate is the push to regulate artificial intelligence and online platforms. Critics are calling it “hidden surveillance.” Officials call it “responsible digital governance.”

So what is really going on?

The Fear: “They Are Watching Everything”

The anxiety stems from provisions tied to Nigeria’s evolving digital regulations, including:

- AI governance frameworks

- Data protection rules

- Content moderation requirements

Critics argue that these policies could:

- Enable tracking of online behavior

- Force platforms to share user data

- Expand government visibility into private digital activities

The concern is simple: Where does regulation end and surveillance begin?

The Numbers: Content Moderation at Scale

Part of the fear comes from recent figures tied to enforcement.

In 2024 alone, more than 58 million online posts were removed across major platforms operating in Nigeria as part of the platforms' regulatory compliance efforts.

Additionally:

- Millions of accounts were deactivated

- Hundreds of thousands of complaints were processed

To critics, these numbers suggest a system capable of large-scale monitoring and control.

The Reality: Platforms, Not Government, Execute Takedowns

However, the actual process tells a different story.

The removals were carried out by private tech companies such as Google, TikTok, and LinkedIn, not directly by NITDA.

These actions followed:

- Platform community guidelines

- User complaints

- Compliance with NITDA's Code of Practice for Interactive Computer Service Platforms/Internet Intermediaries

The regulation requires platforms to respond to harmful content, not to hand over unrestricted user data.

What the NITDA AI Strategy Actually Says

Nigeria’s National AI Strategy focuses on:

- Economic growth and job creation

- Technological advancement

- Ethical and responsible AI development

- Governance frameworks to guide usage

One key pillar is “responsible and ethical AI”, which includes safeguards around:

- Data privacy

- Algorithmic fairness

- Accountability in AI systems

In other words, the framework is designed to manage risks, not to expand unchecked surveillance.

Where the Confusion Comes From

Much of the confusion stems from how modern digital systems work.

AI-driven governance often involves:

- Data analysis

- Pattern detection

- Automated moderation

To the average user, this can feel like constant monitoring, even when it is limited to:

- Public content

- Reported violations

- Platform-level enforcement

The Real Risk: Overreach vs Under-Regulation

Experts say the real issue is not whether regulation exists, but how far it goes.

Too little regulation can lead to:

- Misinformation

- Online fraud

- Exploitation of vulnerable users

Too much regulation risks:

- Over-censorship

- Reduced freedom of expression

- Potential misuse of authority

Nigeria’s current framework attempts to strike a balance between the two, but that balance is still evolving.

Legal Safeguards and Limits

Existing rules require:

- Compliance with court orders for data requests

- Defined timelines for content removal

- Complaint and appeal mechanisms for users

Notably, proposals around online harm protection have also emphasized respect for digital rights and freedom of expression, even while addressing harmful content.

Why Nigerians Are Skeptical About the AI Strategy

Public skepticism is not accidental. It is shaped by:

- Past debates over social media regulation

- Concerns about government overreach

- Limited transparency in digital enforcement

In such an environment, even well-intentioned policies can appear suspicious.

Surveillance or Safety Infrastructure?

The truth lies somewhere between the extremes. Nigeria is building a digital governance system that includes:

- AI oversight

- Content moderation

- Data protection laws

These systems can look like surveillance, but their stated purpose is to:

- Protect users

- Reduce online harm

- Strengthen trust in the digital economy

Conclusion

The claim that NITDA is “spying on Nigerians” via the new AI strategy reflects real concerns about privacy and control in the digital age.

However, the current evidence points to a framework focused on regulation, safety, and accountability, not unchecked surveillance.

Still, one thing is clear: Transparency will determine trust. As Nigeria expands its AI and digital policies, citizens will be watching closely: not just what the rules say, but how they are applied in practice.